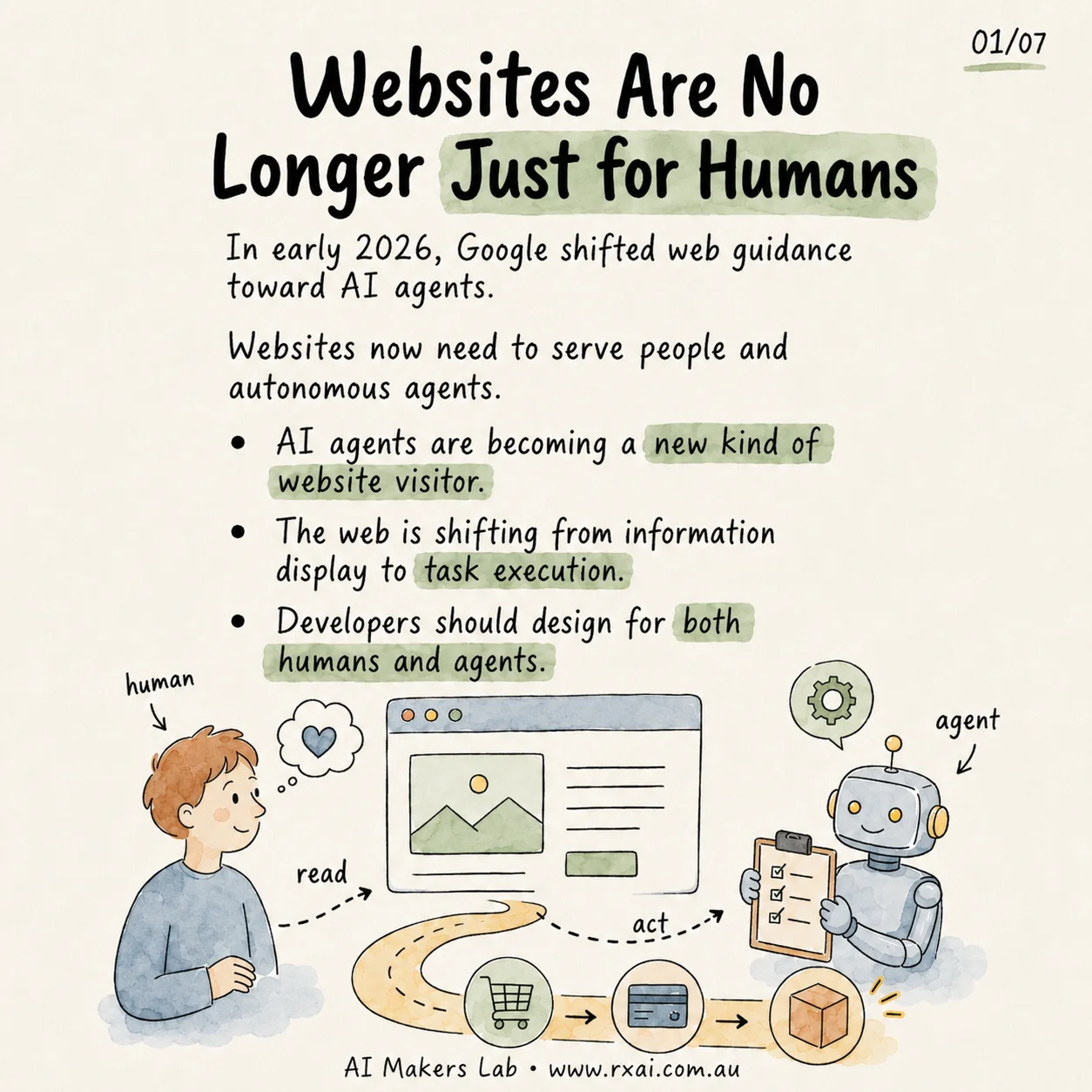

For years, most business websites were planned around one primary visitor: a person with a browser. Search engines crawled the site, but the human was still the one who read the offer, compared the options, filled in the form and decided what happened next.

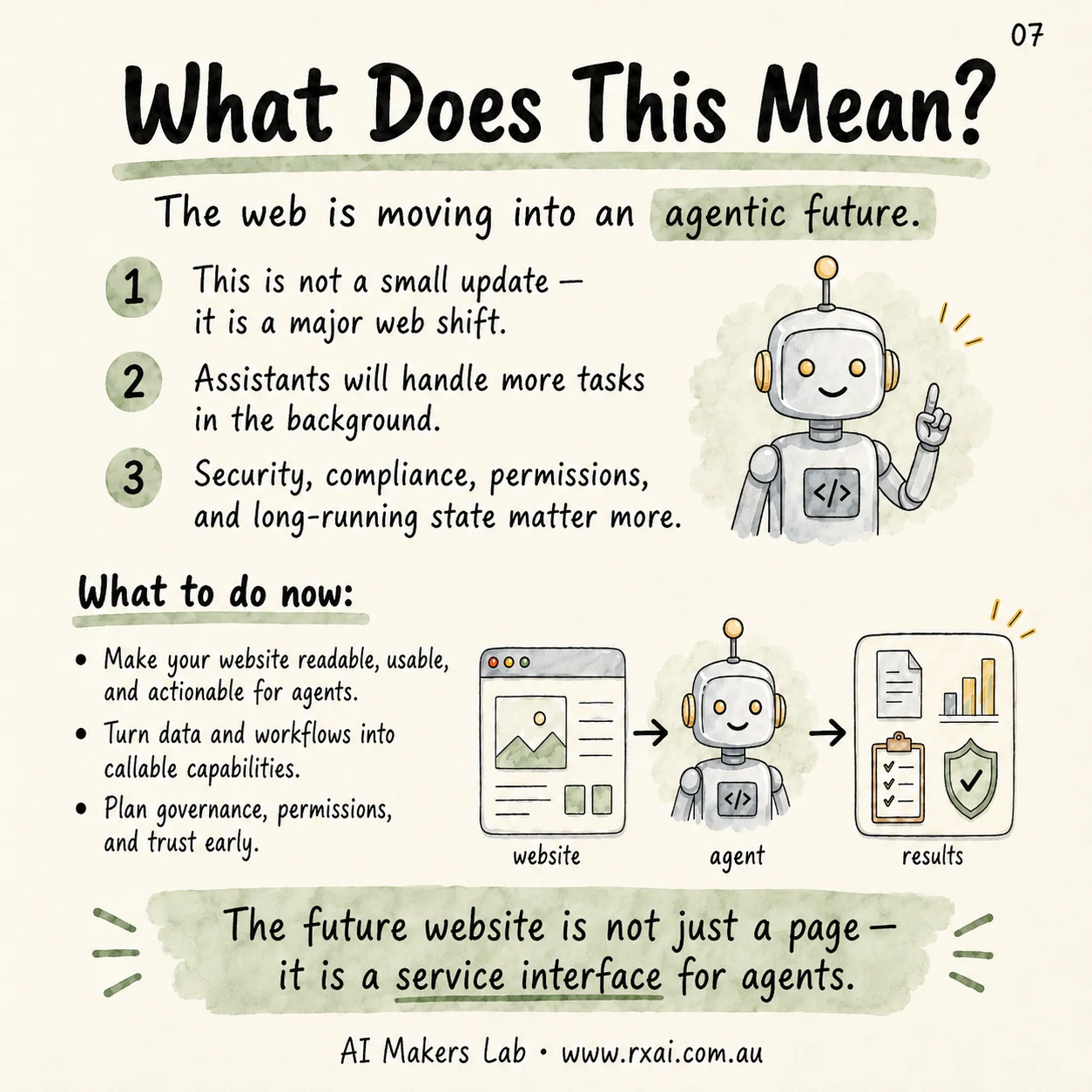

That model is changing. Google's agent tooling and protocol work points toward a web where autonomous AI agents can read pages, connect to tools, compare options and help users complete tasks. A website is no longer only a digital brochure. Increasingly, it is also a machine-readable service interface.

RxAI Insight

Agent readiness is not a cosmetic redesign. It is a visibility, data quality, workflow and trust problem. The sites that prepare early will be easier for both people and AI assistants to understand.

What Changed About Website Visitors?

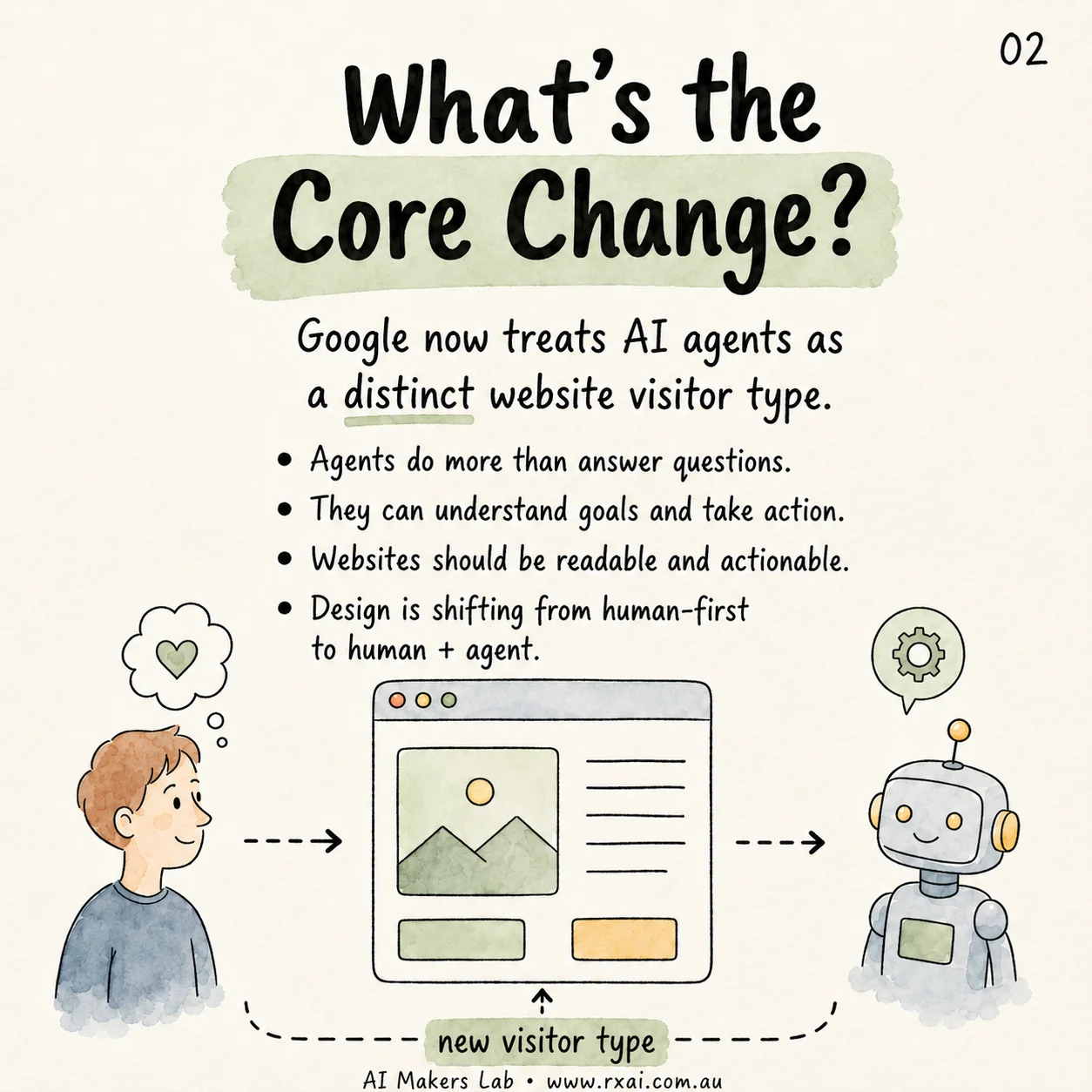

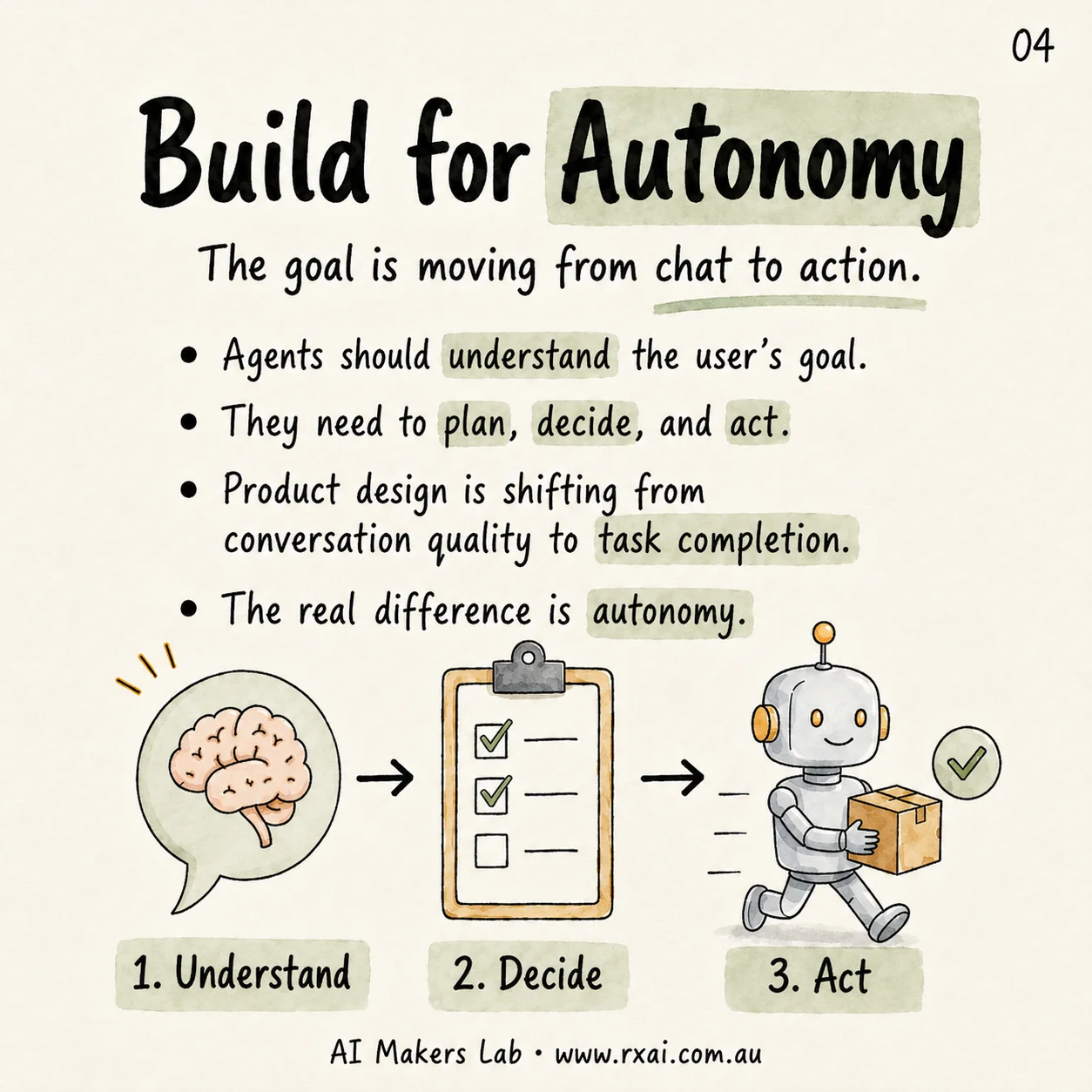

The old website model separated audiences into humans and crawlers. Humans looked at design, copy and trust signals. Crawlers indexed documents so search engines could rank them. AI agents sit in the middle. They need readable content, but they also need enough structure to understand intent, compare choices and decide what action should happen next.

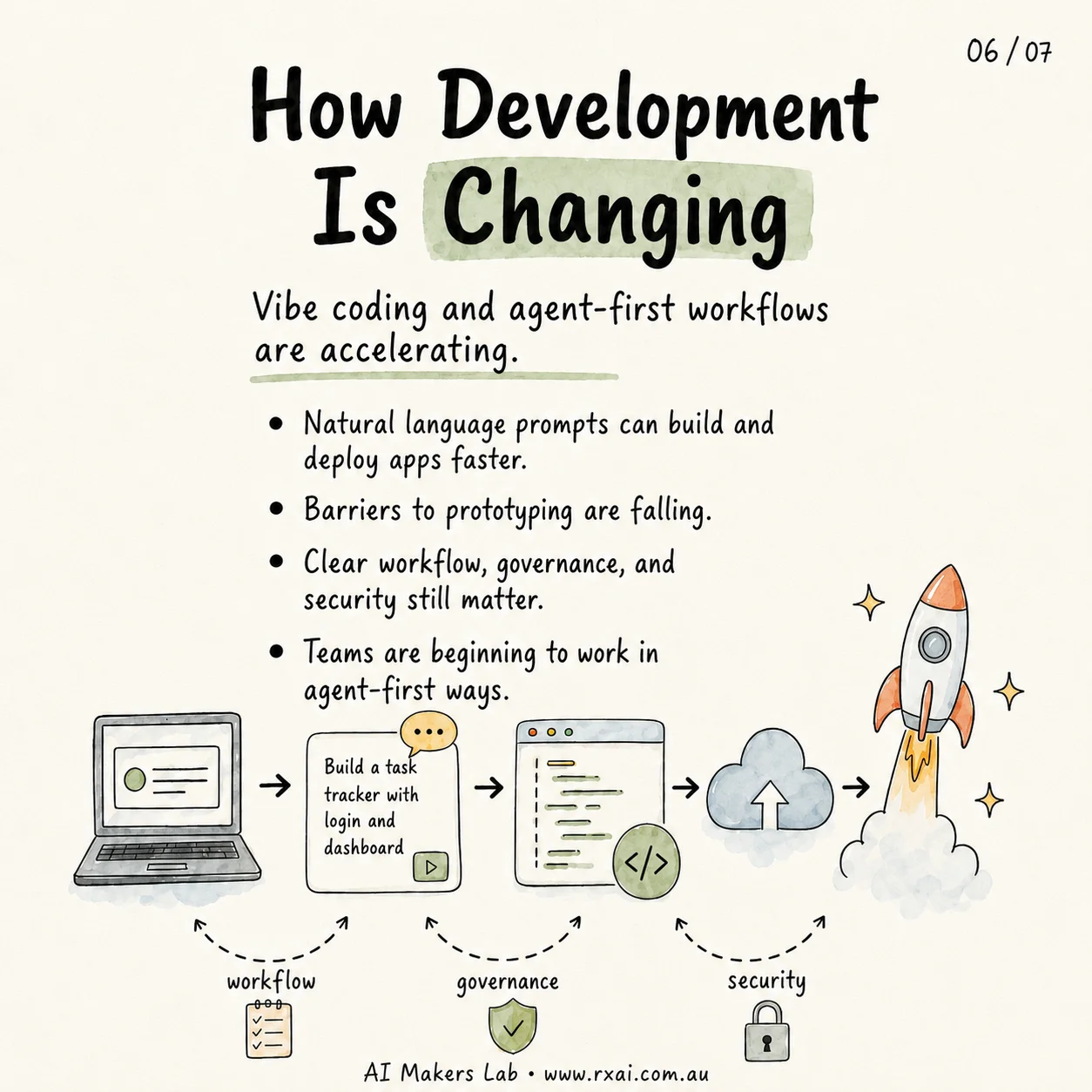

Google's Agent2Agent (A2A) announcement describes agents that can securely communicate, exchange information and coordinate actions across enterprise systems. Google Cloud's Vertex AI Agent Engine documentation also describes agent development patterns built on frameworks such as the Agent Development Kit (ADK) and on the A2A protocol. The signal is clear: agent workflows are moving from experiments into production software architecture.

For a business website, that means the page has to do more than look polished. It has to make the business, services, location, pricing signals, policies, contact pathways and next steps clear enough for an AI system to interpret without guessing.

Why Does This Matter for Traffic and Customers?

Customers are already asking AI assistants to shortlist vendors, explain services, compare options and prepare decisions. As those assistants become more capable, they will increasingly influence which businesses get discovered and which ones are ignored. Your next website visitor may be a person, an AI assistant acting for that person, or both.

If an AI agent can understand your site, it can route a better-fit visitor to your services. If the site is vague, thin, blocked, inconsistent or missing structured signals, the agent may choose a competitor whose offer is easier to parse.

This is where RxAI's AI consulting and GEO services connect directly to business outcomes. GEO is not just about ranking in a blue-link search result. It is about making your business understandable in answer engines, AI summaries and agent-led customer journeys.

"The future website is not just a page. It is a service interface for humans and AI agents." (RxAI)

How Should a Website Be Prepared for LLM Agents?

Agent-ready websites start with the fundamentals that already matter for search, accessibility and conversion. The difference is that the same foundations now need to support machine reasoning and action.

- Clear crawl paths: keep robots.txt, sitemap.xml, canonical URLs and internal links clean.

- Semantic HTML: use proper headings, lists, tables, labels and descriptive links so content has structure.

- Structured data: add JSON-LD for organisations, services, articles, FAQs and contact details where appropriate.

- Answer-ready copy: explain who you serve, what you offer, where you operate and what a customer should do next.

- Action pathways: make booking, enquiry, support and purchase steps explicit rather than buried in vague calls to action.

- Trust and governance: document policies, permissions, privacy and support channels clearly.

These are not only technical SEO tasks. They help an AI agent decide whether your business is relevant, credible and actionable for a user's goal.
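
As one illustration of the structured data item in the list above, here is a minimal Python sketch that builds a schema.org Service description and wraps it in a JSON-LD script tag. The business name, URL, phone number and function names are hypothetical placeholders, and the set of properties is only an assumption about what a typical service page might expose.

```python
import json


def build_service_jsonld(org_name, org_url, service_name, area_served, telephone):
    """Return a schema.org Service (with its provider) as a plain dict.

    All values are supplied by the caller; nothing here is specific to a
    real business.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Service",
        "name": service_name,
        "areaServed": area_served,
        "provider": {
            "@type": "Organization",
            "name": org_name,
            "url": org_url,
            "contactPoint": {
                "@type": "ContactPoint",
                "telephone": telephone,
                "contactType": "customer service",
            },
        },
    }


def to_script_tag(data):
    """Serialise a dict as a JSON-LD <script> block for a page's HTML."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so a stray "</script>" inside a value cannot end the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical example values.
    service = build_service_jsonld(
        org_name="Example Co",
        org_url="https://example.com",
        service_name="AI readiness audit",
        area_served="Sydney",
        telephone="+1-555-0100",
    )
    print(to_script_tag(service))
```

Generating the markup from one data source, rather than hand-editing each page, makes it easier to keep the structured data consistent with the visible copy.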

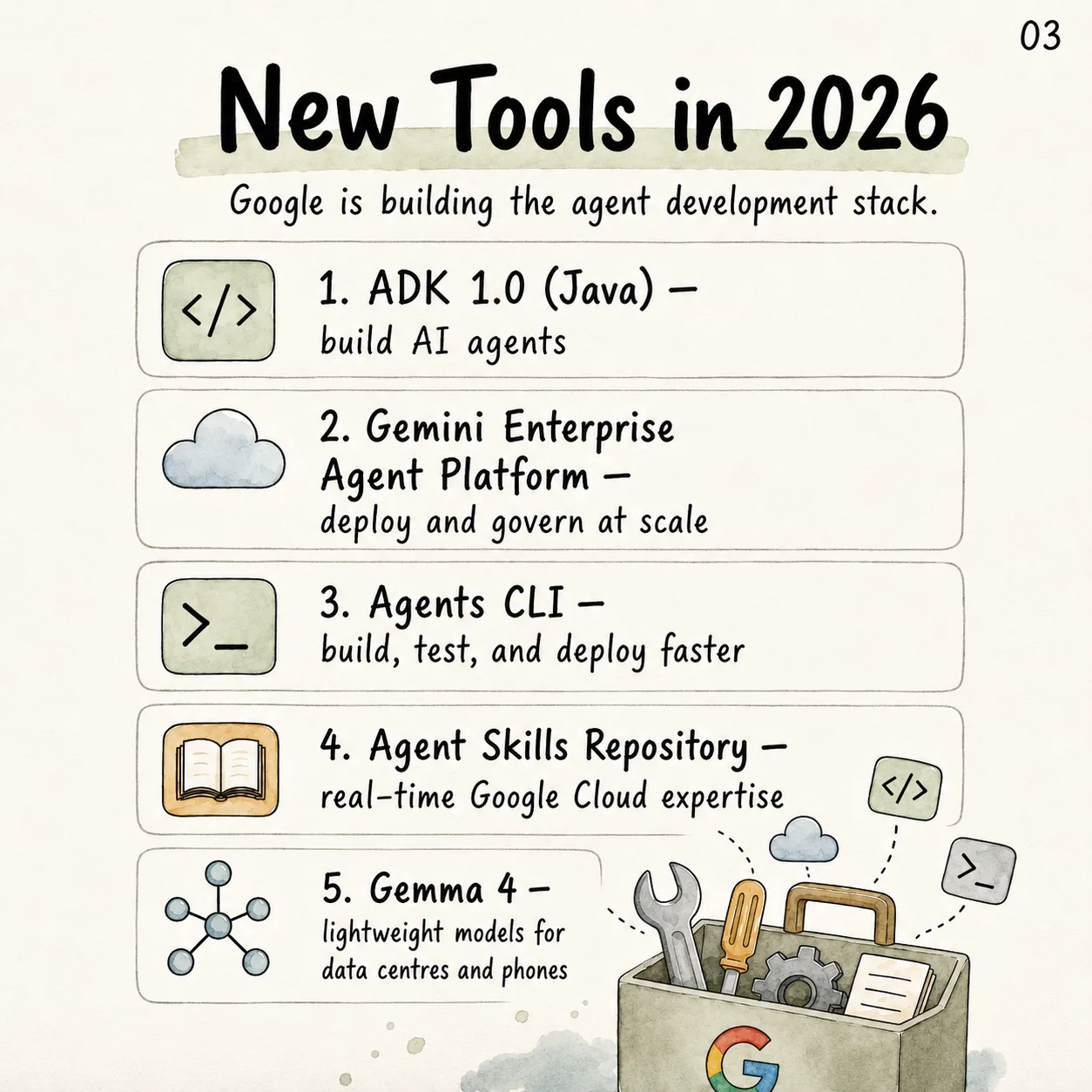

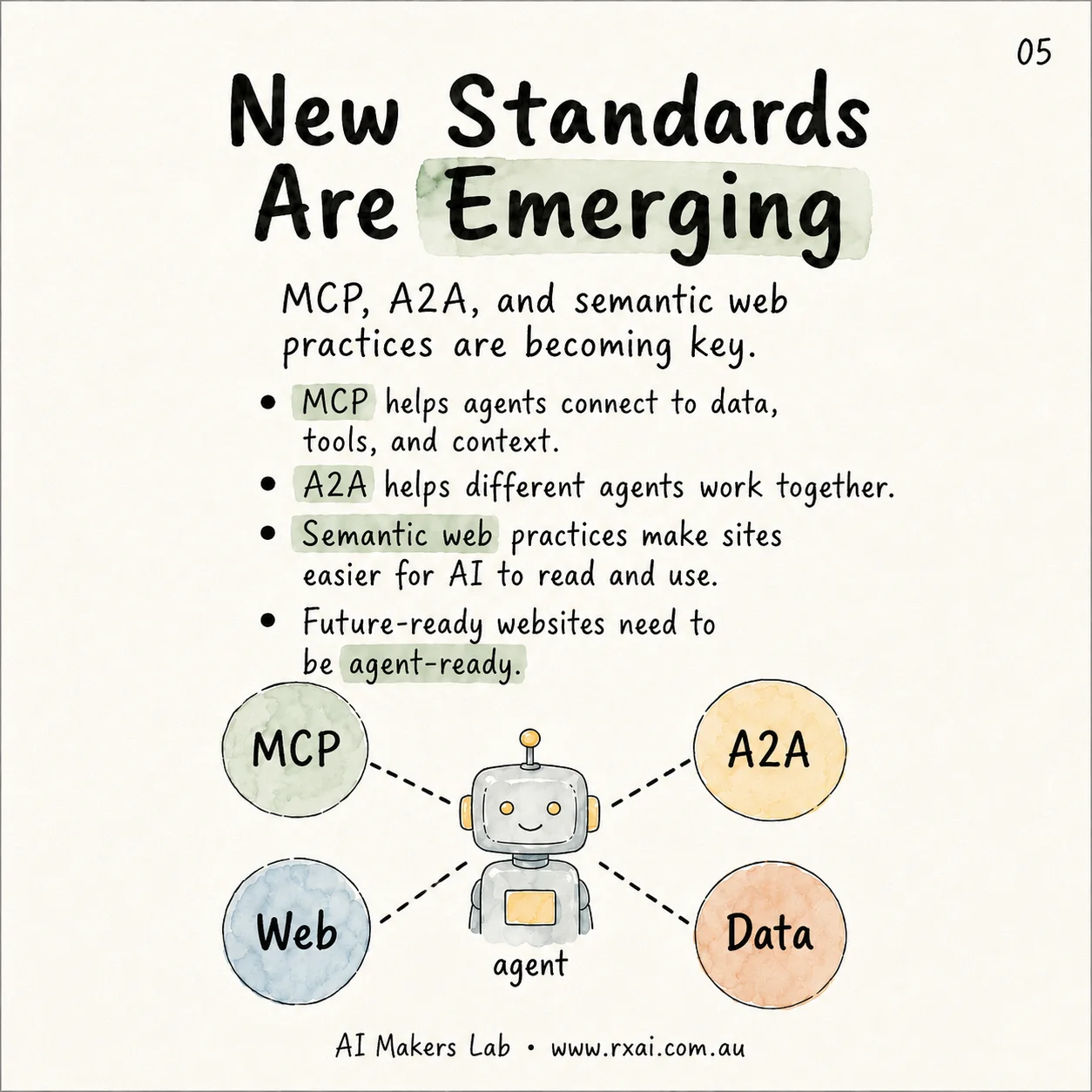

What Standards Should Developers Watch?

Several standards and platform patterns are becoming important for agent-first work. The Model Context Protocol helps agents connect to tools and context. Agent2Agent helps different agents communicate with each other. Semantic web practices help pages expose meaning rather than only visual layout.

Google Cloud's documentation for developing agents describes A2A as an open standard for communication and collaboration between AI agents, and its Vertex AI announcements connect agents with models, enterprise data and workflows. For developers, this is a reminder that the website is only one surface in a larger system. The next layer is capability: what can an agent understand, call, verify and complete?
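
To make the idea of capability more concrete, here is a hedged sketch of how a single website action could be described for an agent. It follows the general shape MCP uses for tools (a name, a description and a JSON Schema for inputs), but the tool itself, its field names and the helper function are hypothetical and are not taken from any real site or SDK.

```python
# Hypothetical tool description in the style of an MCP tool definition:
# a name, a human-readable description and a JSON Schema for the inputs.
BOOK_CONSULTATION_TOOL = {
    "name": "book_consultation",
    "description": "Request a consultation slot for a named contact.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "contact_name": {"type": "string"},
            "email": {"type": "string"},
            "preferred_date": {
                "type": "string",
                "description": "Preferred date in ISO 8601 format",
            },
        },
        "required": ["contact_name", "email"],
    },
}


def missing_required(tool, arguments):
    """Return the required argument names that an agent's call left out."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


if __name__ == "__main__":
    # An agent call that forgot the email address.
    call = {"contact_name": "Alex Example"}
    print(missing_required(BOOK_CONSULTATION_TOOL, call))
```

The point of the sketch is that an explicit schema tells an agent what it may call and what it must supply, which also gives the site something to verify before anything is completed.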

This does not mean every small business needs a custom agent today. It does mean websites should stop hiding important information inside decorative layouts, image-only text, unsupported scripts or unclear forms. The more explicit your site is, the easier it becomes to connect it to future agent workflows.
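
A rough way to spot some of these problems is to scan a page's HTML for images with no alt attribute and form fields with no label. The sketch below uses only Python's standard library; the class and function names are hypothetical, and the checks are deliberately simple (for example, inputs nested inside a label element are not recognised as labelled).

```python
from html.parser import HTMLParser


class AgentReadabilityAudit(HTMLParser):
    """Collect images without alt text and form fields without labels."""

    def __init__(self):
        super().__init__()
        self.images_missing_alt = []
        self.field_ids = []
        self.label_targets = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and "alt" not in attrs:
            # alt="" is allowed for decorative images, so only a missing
            # attribute is flagged.
            self.images_missing_alt.append(attrs.get("src") or "(no src)")
        elif tag == "label" and attrs.get("for"):
            self.label_targets.add(attrs["for"])
        elif tag in ("input", "select", "textarea"):
            if attrs.get("type") in ("hidden", "submit", "button"):
                return
            if attrs.get("aria-label"):
                return  # labelled directly on the element
            self.field_ids.append(attrs.get("id"))

    def unlabeled_fields(self):
        """Return ids of fields that no <label for="..."> points at."""
        return [
            field_id
            for field_id in self.field_ids
            if field_id is None or field_id not in self.label_targets
        ]


def audit(html):
    """Run the audit over an HTML string and return the findings."""
    parser = AgentReadabilityAudit()
    parser.feed(html)
    parser.close()
    return {
        "images_missing_alt": parser.images_missing_alt,
        "unlabeled_fields": parser.unlabeled_fields(),
    }


if __name__ == "__main__":
    sample = (
        '<img src="pricing.png">'
        '<img src="logo.png" alt="Company logo">'
        '<label for="email">Email</label><input id="email" type="email">'
        '<input id="phone" type="tel">'
    )
    print(audit(sample))
```

A check like this does not replace a proper accessibility review, but it can flag the most obvious places where meaning is locked inside an image or an unnamed form field.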

What Should Businesses Do Now?

Most businesses do not need to panic. They need a practical readiness plan. Start by asking whether your website can answer the same questions a strong salesperson would answer in the first conversation.

- Audit AI visibility: ask major AI assistants what they know about your business, services and location.

- Fix the crawl layer: confirm your sitemap, robots file, canonical URLs and redirects are clean.

- Strengthen service pages: make each offer specific, structured and easy to quote accurately.

- Add FAQ and schema: turn customer questions into direct answers and valid structured data.

- Map future actions: identify which tasks an agent might one day complete, such as booking, quote requests, product comparison or support routing.

- Plan governance early: decide what agents can see, what they can trigger and where human approval is required.
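
For the crawl layer step in the list above, a quick check is to test which crawlers a robots.txt file actually allows. The sketch below uses Python's standard `urllib.robotparser` on an in-memory sample, so it makes no network requests. The sample rules and the `example.com` URL are hypothetical; `GPTBot` and `Googlebot` are used only as example user-agent tokens.

```python
from urllib.robotparser import RobotFileParser


def crawl_access(robots_txt, user_agents, url):
    """Return a dict mapping each user agent to whether it may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}


# Hypothetical robots.txt that blocks one AI crawler entirely and keeps
# everyone else out of /admin/ only.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    report = crawl_access(
        SAMPLE_ROBOTS_TXT,
        user_agents=["GPTBot", "Googlebot"],
        url="https://example.com/services/",
    )
    for agent, allowed in report.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Running the same check against your own robots.txt for the crawlers you care about shows whether a blanket rule is quietly hiding service pages from AI systems.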

If you want to understand what AI systems can currently read about your business, start with our guide on how sitemap.xml and robots.txt affect AI discovery. Then book a free RxAI consultation and we can map the highest-value fixes for your website.

Frequently Asked Questions

Not always. Most businesses should begin by improving crawlability, semantic HTML, structured data, service clarity, FAQ content and clear action pathways before considering a full rebuild.

AI assistants can influence discovery by recommending providers, comparing services and routing users toward useful pages. A clear, structured and trustworthy website gives those systems better information to work with.

SEO helps search engines index and rank pages. GEO helps AI systems understand and cite your business in generated answers. Agent readiness goes further by preparing content, data, permissions and workflows for AI systems that may take action.

Start with robots.txt, sitemap.xml, canonical URLs, service-page clarity, FAQ coverage, structured data and contact pathways. These basics make the site easier for both humans and AI systems to understand.

Want This Applied to Your Business?

RxAI offers a free 30-minute consultation to map how these strategies fit your operations. No obligation, no sales pitch.
